Introduction

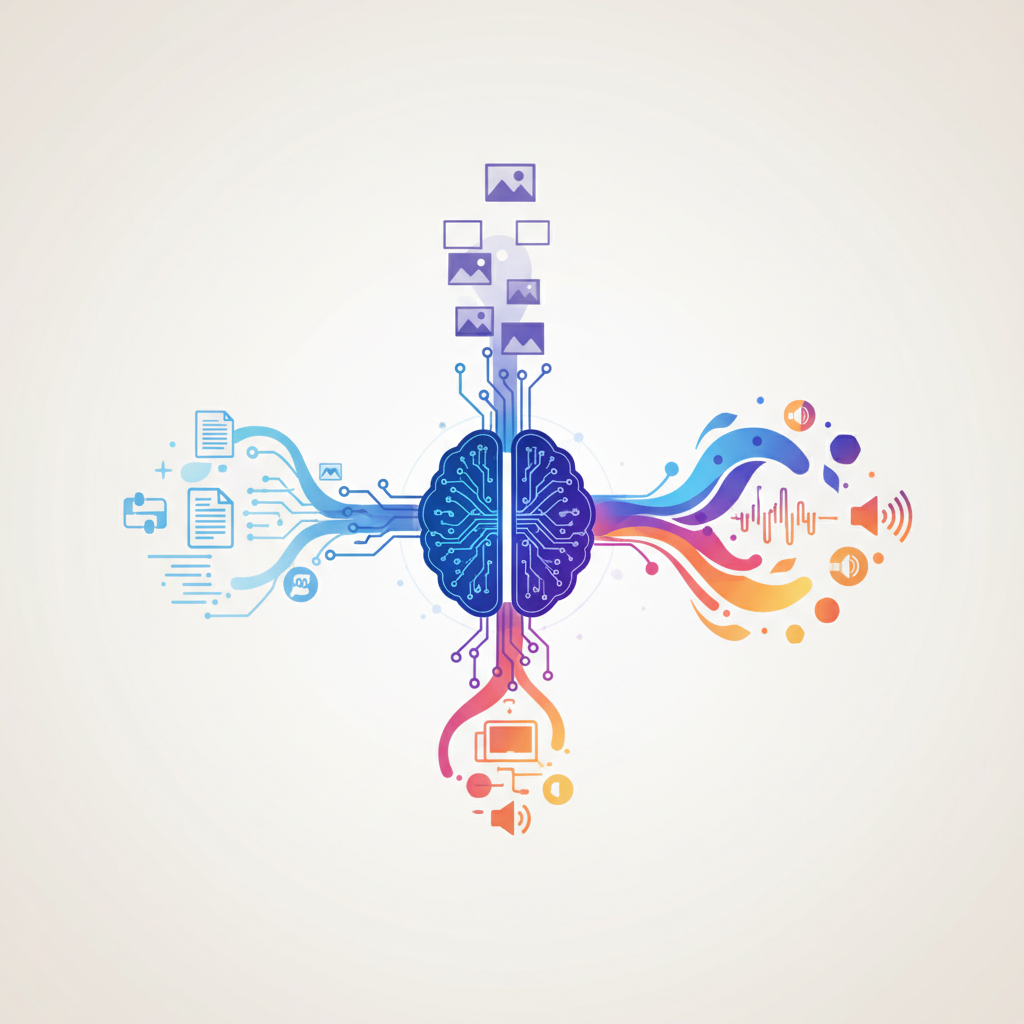

Multimodal models represent a significant advancement in artificial intelligence, bridging the gap between different forms of data: text, images, and sound. Unlike traditional models that specialize in a single mode of information, multimodal models can understand, process, and generate content across various media, offering a richer, more integrated understanding of the world.

What Are Multimodal Models?

At their core, multimodal models are designed to handle multiple forms of data simultaneously. This capability allows them to capture the complexity of human communication and the nuanced ways our senses interact with the environment. By combining text, image, and audio data, these models can achieve a more holistic understanding of content and context, leading to more accurate and relevant outcomes.

Why They Matter

The integration of multiple data types opens up unprecedented possibilities in AI applications. From enhancing accessibility for people with disabilities to powering creative tools and improving search engines, multimodal models are transforming how we interact with technology.

Key Concepts and Technologies

- Embeddings: Multimodal models use embeddings to convert different types of data into a common format that can be processed together. This enables the model to understand and relate to disparate forms of information.

- Cross-attention mechanisms: These allow the model to focus on relevant aspects of one modality when processing another, such as identifying relevant visual features when processing text.

- Joint representation learning: This approach learns to integrate and represent multiple data types together, facilitating tasks that require understanding the relationships between text, images, and sounds.

Applications and Examples

Concrete examples of multimodal models in action include image captioning, where models generate descriptive text for images; text-to-image generation, where text prompts are used to create relevant visuals; speech-to-text conversion enhanced with visual context; and comprehensive video understanding that integrates visual and audio cues.

Major Architectures and Approaches

Notable approaches in multimodal modeling include CLIP-style contrastive learning, which matches images with relevant text; encoder–decoder setups that translate between modalities; and vision-language models that understand and generate content based on both text and images. Audio-text models are also emerging, offering new ways to integrate sound with other forms of data.

Real-World Systems and Applications

Examples of multimodal models in the real world range from accessibility tools that provide image descriptions for the visually impaired, to educational software that integrates multiple forms of media to enhance learning, and creative tools that enable new forms of artistic expression.

Current Limitations and Challenges

Despite their potential, multimodal models face significant challenges, including the need for large, diverse datasets; the risk of inheriting and amplifying biases; issues with robustness and susceptibility to hallucinations; and ensuring alignment with human intent.

Looking Forward

The future of multimodal models holds promise for even more unified approaches that seamlessly integrate text, images, and sound in real-time. This could revolutionize areas such as work, creativity, and how we interact with technology, underscoring the importance of ongoing research and development in this field.