Introduction

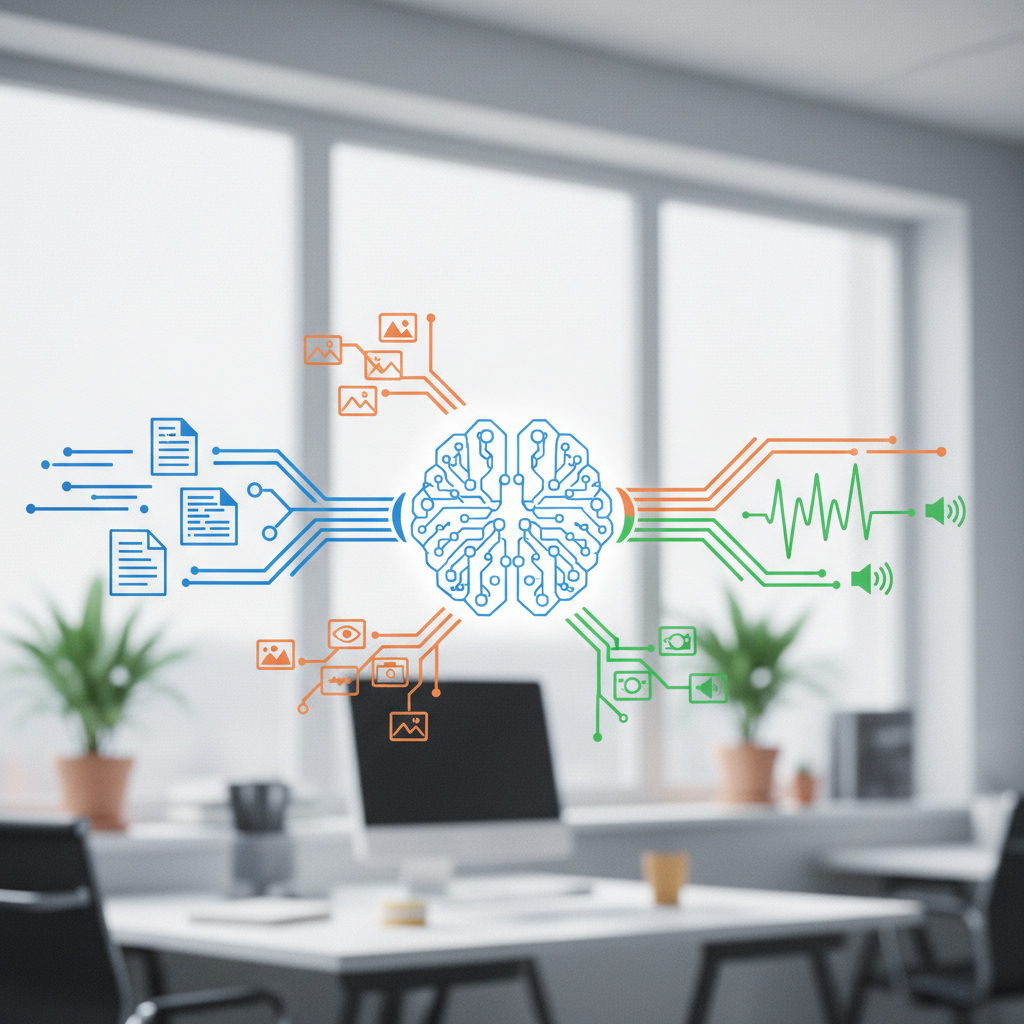

Multimodal models represent a significant advance in artificial intelligence: they combine the understanding of text, images, and sound within a single framework. These models are designed to process and interpret data from multiple modalities (forms of input such as text, images, and audio) at the same time. This sets them apart from traditional unimodal models, which can handle only one type of input.

What Are Multimodal Models?

At their core, multimodal models are AI systems that can understand, generate, or translate information across different forms of data. A single model can, for example, interpret the contents of a picture, the meaning of a sentence, and the tone of a sound clip.

Why Do Multimodal Models Matter?

Integrating multiple modalities lets these models build a more complete picture of the world, somewhat as humans combine sight, hearing, and language. By drawing on several sources of information, these systems can perform more complex tasks, support richer interactions, and solve problems that are difficult or impossible for single-modal approaches. Answering a question about a photo, for instance, requires understanding both the image and the text of the question.

Key Concepts in Multimodal Learning

Embeddings: Multimodal models convert each type of input into embeddings, numerical vectors that represent the content of that input. When the vectors for different modalities share a common space, the model can process and compare text, images, and audio in a unified manner.
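To make the idea of a shared embedding space concrete, here is a minimal Python sketch, assuming each modality already has an encoder that outputs a feature vector of its own size. The feature values and the projection matrices `W_text` and `W_image` are hypothetical placeholders standing in for learned weights; the sketch only shows how a linear projection plus L2 normalization places both inputs in the same space, where cosine similarity can compare them.

```python
import math

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def l2_normalize(v):
    """Scale v to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]

def cosine(a, b):
    """Cosine similarity of two unit-length vectors is just their dot product."""
    return sum(x * y for x, y in zip(a, b))

# Hypothetical raw features: the text encoder emits 3-dim vectors,
# the image encoder emits 4-dim vectors, so they cannot be compared directly.
text_features = [1.0, 0.0, 2.0]
image_features = [0.5, 1.0, 0.0, 1.5]

# Hypothetical projections into a shared 2-dim embedding space
# (in a real model these weights are learned during training).
W_text = [[0.2, 0.1, 0.4],
          [0.0, 0.3, 0.1]]
W_image = [[0.1, 0.0, 0.2, 0.3],
           [0.2, 0.1, 0.0, 0.1]]

text_emb = l2_normalize(matvec(W_text, text_features))
image_emb = l2_normalize(matvec(W_image, image_features))
similarity = cosine(text_emb, image_emb)

print(f"shared dim: {len(text_emb)}, cosine similarity: {similarity:.3f}")
```

Once both inputs live in the same space, the same similarity function works for any pair of modalities, which is what makes retrieval and comparison across text, images, and audio possible.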

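Cross-Attention Mechanisms: Cross-attention lets elements from one modality attend to elements from another, for example letting each word in a caption focus on the most relevant regions of an image. This improves the model's ability to relate the modalities when understanding or generating content.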
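The following minimal Python sketch shows scaled dot-product cross-attention, where the queries come from one modality (here, text tokens) and the keys and values come from another (here, image patches). It assumes toy two-dimensional features and omits the learned query, key, and value projections a real model would apply first; the names `cross_attention`, `text_queries`, `patch_keys`, and `patch_values` are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)  # subtract the max to avoid overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query (from one modality) attends over keys/values from another.

    Returns (outputs, attention_weights): one output vector and one
    weight distribution per query.
    """
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Scaled dot-product score between this query and every key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy inputs: 2 text-token queries, 3 image-patch keys/values.
text_queries = [[1.0, 0.0], [0.0, 1.0]]
patch_keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
patch_values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

outputs, weights = cross_attention(text_queries, patch_keys, patch_values)
for i, w in enumerate(weights):
    print(f"token {i} attention over patches:", [round(x, 3) for x in w])
```

Each text token ends up with a probability distribution over image patches, and its output mixes the patch values accordingly; the first token leans toward the first patch and the second toward the second.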

Joint Representation Learning: This is the process of learning a shared representation for different types of data, so that related inputs (such as an image and its caption) end up close together and the modalities can interact seamlessly.
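One widely used way to learn a joint representation is a contrastive objective like the one popularized by CLIP: matched image-text pairs in a batch are pulled together and mismatched pairs are pushed apart. The minimal Python sketch below assumes the embeddings are already L2-normalized and uses an illustrative temperature of 0.07; `contrastive_loss` is a hypothetical helper rather than a library API, and contrastive training is only one of several approaches to joint representation learning.

```python
import math

def log_softmax(row):
    """Numerically stable log-softmax over a list of logits."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_sum for x in row]

def contrastive_loss(text_embs, image_embs, temperature=0.07):
    """Symmetric InfoNCE loss over a batch of (assumed unit-length) embeddings.

    The i-th text and i-th image form a matched pair; every other
    pairing in the batch serves as a negative example.
    """
    n = len(text_embs)
    # logits[a][b] = similarity of text a and image b, sharpened by temperature.
    logits = [[sum(t * i for t, i in zip(text_embs[a], image_embs[b])) / temperature
               for b in range(n)] for a in range(n)]
    # Text-to-image: each row should place its probability mass on the diagonal.
    loss_t2i = -sum(log_softmax(logits[a])[a] for a in range(n)) / n
    # Image-to-text: the same requirement applied to each column.
    columns = [[logits[a][b] for a in range(n)] for b in range(n)]
    loss_i2t = -sum(log_softmax(columns[b])[b] for b in range(n)) / n
    return (loss_t2i + loss_i2t) / 2

# Toy batch of two pairs.
texts = [[1.0, 0.0], [0.0, 1.0]]
matched_images = [[1.0, 0.0], [0.0, 1.0]]     # each image matches its caption
mismatched_images = [[0.0, 1.0], [1.0, 0.0]]  # pairs deliberately swapped

aligned_loss = contrastive_loss(texts, matched_images)
swapped_loss = contrastive_loss(texts, mismatched_images)
print(f"aligned: {aligned_loss:.4f}, swapped: {swapped_loss:.4f}")
```

The loss is near zero when each text embedding is closest to its own image and large when the pairs are mismatched; minimizing it over many batches is what pushes the two encoders toward a shared representation.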

Real-World Applications

Multimodal models are transforming a wide range of tasks, from image captioning and text-to-image generation to speech-to-text and video understanding. They also play an important role in assistive technologies for people who are blind or have low vision and for people who are deaf or hard of hearing, as well as in new tools for creative work.

Recent Advances and Notable Architectures

Recent years have seen rapid progress in multimodal modeling. Many notable architectures are transformer-based, such as CLIP, which learns a shared image-text embedding space through contrastive training, and Flamingo, which adds cross-attention layers that connect a language model to visual features. Systems like these have set new benchmarks on tasks ranging from image-text retrieval and captioning to content creation.

Challenges and Considerations

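Despite their potential, multimodal models face several challenges: aligning data across modalities (for example, pairing images with accurate captions or synchronizing audio with video), managing bias, evaluating performance accurately, and scaling to larger models and datasets. Addressing these issues is crucial for advancing the field and realizing the benefits of these systems.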

Future Directions

Multimodal models could have a substantial impact on everyday tools, accessibility, and human–AI interaction. As they mature, they may make technology more intuitive and accessible for everyone.